There are too many people in this world who are unwilling to go the extra mile. Don’t be one of them. Microsoft has built time-proven GUI-based tools to work with Active Directory, task scheduling, SQL and other technologies, but PowerShell opens up these technologies and permits a great deal of flexibility while managing Microsoft applications.

Let’s take a look at an introductory statement made in a Microsoft TechNet article titled “Scripting With Windows PowerShell”:

“Windows PowerShell is a task-based command-line shell and scripting language designed especially for system administration. Built on the .NET Framework, Windows PowerShell helps IT professionals and power users control and automate the administration of the Windows operating system and applications that run on Windows.”

Time to break this down:

“Windows PowerShell is a task-based command-line shell…”

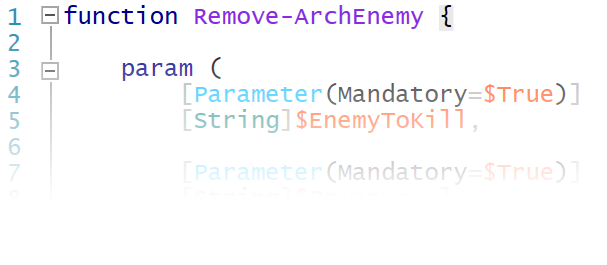

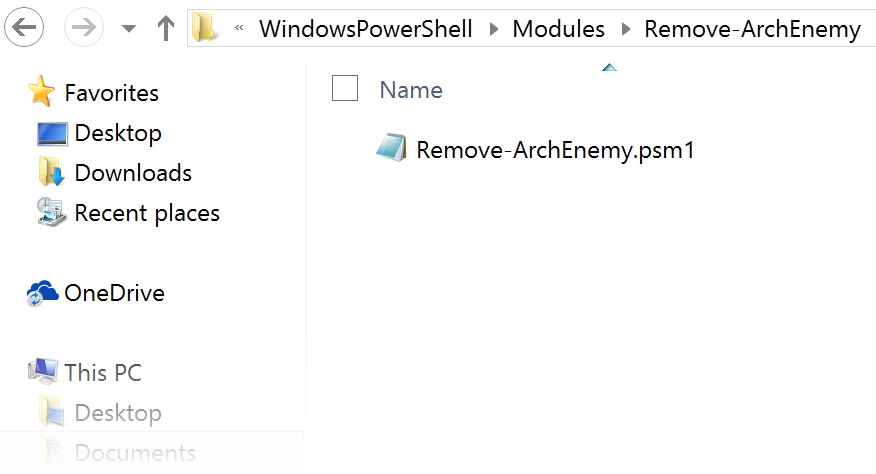

Yes, PowerShell is task-based. While this terminology may have no specific interpretation, let’s just say PowerShell was built to get work done. The evidence is in cmdlets (pronounced: command-lets). Cmdlets are the lightweight commands you run at the console or in a PowerShell script, and they follow the form “Do THIS to THAT”. Simple, readable and efficient, these commands syntactically read <Verb>-<Noun>. Here are some examples:

Clear-History, Export-Csv, Move-Item

Many cmdlets are installed with every Windows instance. Many others, built by Microsoft and third parties, can be installed manually. You can even create and install your own. Cmdlets can also have aliases assigned to them. For example, in Windows PowerShell ls is an alias for Get-ChildItem, which gives a directory listing. In short, PowerShell is built for productivity and has a relatively gentle learning curve to get you started.
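To make the Verb-Noun pattern and aliases concrete, here is a minimal sketch you can paste into a console. It assumes Windows PowerShell 5.1 or later; the variable names are mine, purely for illustration, and the exact list of cmdlets and aliases you get back depends on your version and installed modules.

```powershell
# Every cmdlet follows Verb-Noun. List a few whose verb is "Get".
$getCmdlets = Get-Command -CommandType Cmdlet -Verb Get |
    Select-Object -First 5 -ExpandProperty Name
$getCmdlets

# "Do THIS to THAT": get the child items of the current directory.
Get-ChildItem -Path .

# Find every alias that points at Get-ChildItem.
# On Windows this typically includes ls, dir and gci.
$aliases = Get-Alias -Definition Get-ChildItem |
    Select-Object -ExpandProperty Name
$aliases
```

Get-Command and Get-Alias are worth remembering on their own: when you are unsure what a command is called or what an alias really runs, they let you ask the shell instead of guessing.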

Got it? Let’s move on.

“Built on the .NET Framework…”

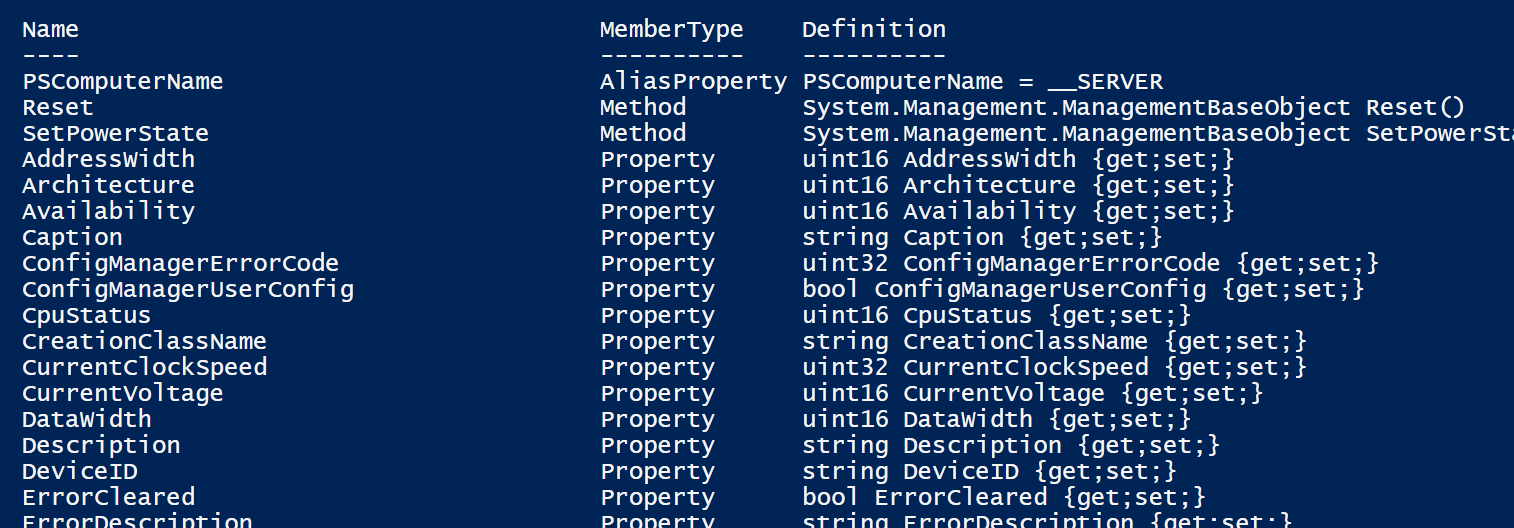

The power behind PowerShell, its ability to be applied to a wide range of scripting scenarios, lies in .NET. This is huge.

As a brief explanation, .NET is Microsoft’s development framework, composed of a large library (the Framework Class Library, or FCL) and a Common Language Runtime (CLR). The CLR gives multiple languages access to the FCL, so there is no need to rewrite class libraries for different .NET languages. When PowerShell was introduced, it had been built upon the foundation of .NET, just as Visual C# and Visual Basic .NET were before it. This means that it had access to Microsoft’s core development library from the very beginning! This gives PowerShell a great deal of flexibility as a management tool.
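Here is a small sketch of what that access looks like in practice. It assumes Windows PowerShell 5.1 or later, and the variable names are only illustrative. The classes used (System.Math, System.Text.StringBuilder, System.DateTime) are ordinary .NET types, nothing PowerShell-specific.

```powershell
# Call a static method on a .NET class directly from PowerShell.
$root = [System.Math]::Sqrt(144)

# Create a .NET object and call its instance methods.
# [void] discards the StringBuilder that Append() returns.
$builder = New-Object -TypeName System.Text.StringBuilder
[void]$builder.Append('Power')
[void]$builder.Append('Shell')
$text = $builder.ToString()

# Cmdlet output is itself a .NET object, not plain text.
$now = Get-Date
$typeName = $now.GetType().FullName

$root
$text
$typeName
```

The last part is the one to notice: Get-Date hands back a System.DateTime object, so anything a C# developer can do with that type, you can do from the console as well.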

If you are truly a programming novice, you may not be following. If this is the case, I encourage you to pick up the basics. Try Microsoft’s C# Fundamentals for Absolute Beginners. In order to truly appreciate PowerShell’s place as a .NET technology, you will need to understand a bit about .NET and object-oriented programming. You don’t need to become a developer, but I have seen C# developers mold and bend PowerShell in ways that have really impressed me. I want to set you up to learn how your tools accomplish your work. Doing so will up your administrative game and get you the professional brawn you’ve been looking for. I have always believed that if you want to become a great SysAdmin or Engineer, you must understand basic development and programming concepts.

“Windows PowerShell helps IT professionals and power users control and automate…”

Why learn PowerShell? I can boil it down to one word: automation. PowerShell will empower you by granting the ability to perform tasks quickly and efficiently. It only takes some additional effort up front to create tools that will save time, money and your sanity. Creating your own tools to solve the problems you face on a daily basis will boost your confidence and build your portfolio. You can also share, perfect and reuse these tools in the future. PowerShell may be relatively new, but it was desperately needed, and it is very powerful. Learn it, love it, and become more than the average Windows admin. Yes, you’re working yourself out of a job... and into a better one. In the end your manager, and your career, will thank you.

If you’re on the fence about PowerShell, get off and become a power user. Dig in and have fun!

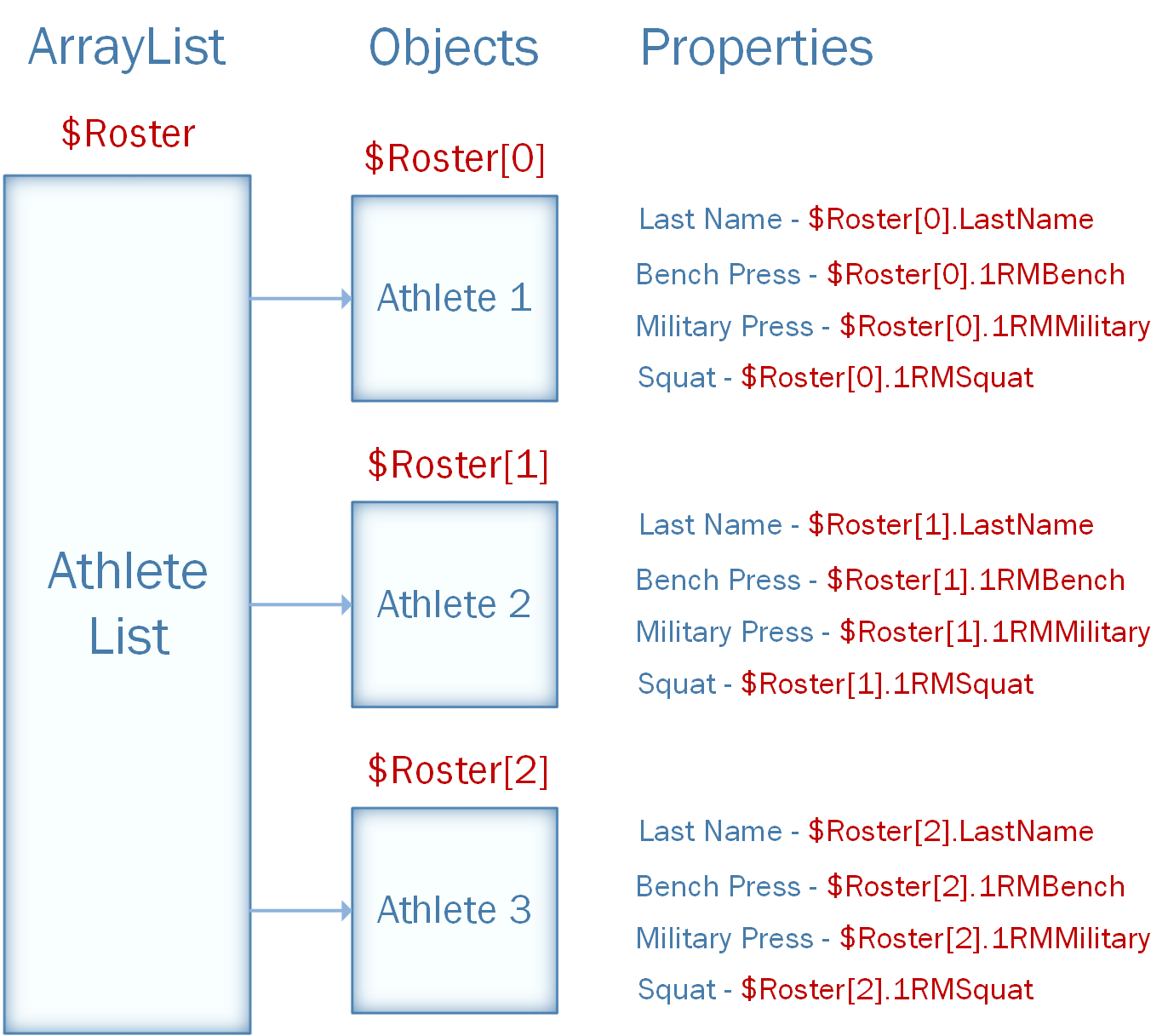

If you’ve built scripts in Linux environments, check out PowerShell Objects Part 1: No More Parsing! to see how PowerShell differs from the languages you may be accustomed to.